Last night the TensorFlow team held the Dev Summit 2017. Although it took place in California, all talks were streamed live on YouTube (https://www.youtube.com/watch?v=LqLyrl-agOw). As I use TensorFlow a lot, I loved watching some of the talks (together with 2500 other people watching live). This blog post is a summary of the first talks I watched before going to bed.

First of all, it was great watching the announcement of version 1.0 at the start of the conference! The later talks kept referring back to new features, some of which will already be available in the next release!

TensorBoard for debugging and visualisation

Dandelion Mané explained TensorBoard. Up till now I mainly used it as a cool visualisation tool for other people (impressing your boss and showing co-workers how cool your network is). Dandelion also showed how practical TensorBoard is for debugging a model that is not learning. He started by creating a graph with an error in it (which I did not spot) and showed that the model was not training. He then showed how easy it is to track down the mistake with TensorBoard. I know I will be using TensorBoard next time I have a problem.

Another thing I did not know was in there is the embedding visualiser. He showed the principal components of MNIST in 3D and was able to create a 3D cloud of smart replies from Google Inbox. This feature really helps when you want to know what exactly your model learned, and where its mistakes come from. You can find Dandelion's code and slides here: https://gist.github.com/dandelionmane/4f02ab8f1451e276fea1f165a20336f1#file-mnist-py

High-level libraries

François Chollet talked about the integration of high-level libraries in TensorFlow. Although TFLearn, TFSlim, and SKFlow are already available, I still used Keras for quickly building a model. The TensorFlow team took the ideas of Keras and implemented them directly in TensorFlow in an efficient manner. What I find most interesting is that Keras bakes in best practices; for example, it initialises your weights in a way that makes sense.
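To make the point about sensible weight initialisation a bit more concrete, here is a minimal sketch in plain Python of Glorot (Xavier) uniform initialisation, which is the default Keras uses for the weights of a dense layer. The function name `glorot_uniform` and the layer sizes (784 inputs, 10 outputs) are my own choices for illustration; this is not the Keras implementation, just the idea behind it.

```python
import math
import random


def glorot_uniform(fan_in, fan_out, seed=None):
    """Return a fan_in x fan_out weight matrix drawn from U(-limit, limit).

    The limit shrinks as the layer gets wider, which keeps the scale of
    the activations roughly constant from layer to layer.
    """
    rng = random.Random(seed)
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)]
            for _ in range(fan_in)]


# Hypothetical layer: 784 inputs (an MNIST image) to 10 outputs (digits).
weights = glorot_uniform(fan_in=784, fan_out=10, seed=0)
limit = math.sqrt(6.0 / (784 + 10))

print(len(weights), len(weights[0]))
print(all(-limit <= w <= limit for row in weights for w in row))
```

The weights are small and random rather than all zeros, so every unit starts out slightly different and gradients can flow from the first training step.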

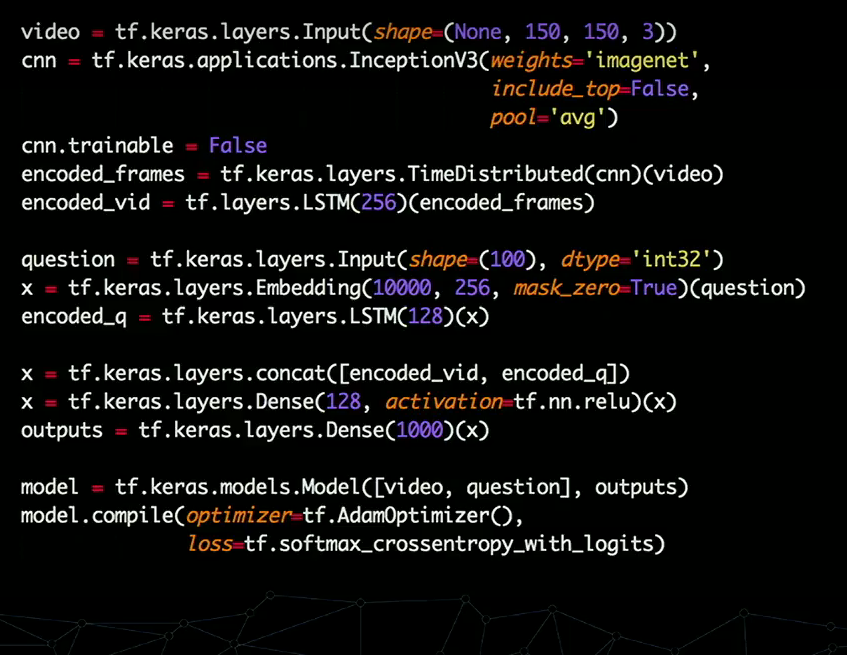

Another awesome development is the canned estimators. These are basically models in a box, ready to use in your own code. At the end of his talk François created a network for what seems to be a difficult task: show a computer a video, ask a question, and let the computer answer with a single word. The resulting code fit on a single slide.

Unfortunately, I am in the wrong timezone for watching the whole summit live, but I will surely be watching the rest of the talks later this week. If this post piqued your interest:

- The schedule can be found here: https://events.withgoogle.com/tensorflow-dev-summit/agenda/#content

- And the video here https://www.youtube.com/watch?v=LqLyrl-agOw

Let me know what your thoughts were on the conference, and what talk you enjoyed the most!
